For much of the past decade, the dominant form of child abuse material online involved the redistribution of existing content. Detection strategies reflected that assumption: most harm was thought to stem from recirculation, and scanning for known digital fingerprints was considered sufficient to manage risk exposure.

That assumption no longer holds.

As Europol noted in its 2024 Internet Organised Crime Threat Assessment, the online abuse landscape is shifting from a “collector” ecosystem to a “producer” one. Children are increasingly being groomed or extorted to generate imagery, while generative AI enables offenders to create novel or synthetic content at a scale never seen before. NCMEC’s 2024 data reflects this shift, reporting a 1,325% increase in CyberTipline reports that involved generative AI technology.

For Trust & Safety and product leaders, this introduces a core operational challenge. Human review is unsustainable at scale and presents long‑term psychological risk to teams. Hash‑based detection, while still essential, cannot identify content that hasn’t been previously seen or fingerprinted. Novel, modified, or synthetic material lacks the known fingerprints older systems rely on, and often appears faster than current systems can surface it, let alone respond to it.

Under frameworks such as the UK Online Safety Act and the EU Digital Services Act, platforms are no longer assessed solely on whether they remove known harmful content. They are expected to identify emerging risks, apply proportionate detection measures, and demonstrate how those measures operate in practice. In the UK, Ofcom is specifically exploring whether expectations around the detection of previously unknown child sexual abuse material (CSAM) may form part of its proposed Additional Safety Measures.

As a result, safety is no longer defined only by what platforms take down after the fact, but by what they can detect and evidence before harm escalates. The ability to identify previously unknown CSAM is moving from a specialist capability to a baseline platform requirement, critical to protecting users, managing institutional and executive risk, and operating defensibly in an active enforcement environment.

The operational and compliance risk of traditional CSAM detection

The traditional operating model, known‑fingerprint matching backed by human review, is now under pressure in two distinct ways, driven by both scale and novelty, with clear implications for how platforms are assessed.

First, hash‑based detection is structurally limited to content that still matches a known digital fingerprint. Novel imagery, synthetic content, and even minor, non‑visual changes can break that match, allowing visually identical material to evade traditional detection systems.

Second, human‑led workflows cannot scale to the volume, velocity, or ambiguity of current harms. Review teams face increasing throughput and uncertainty, placing limits on operational sustainability, consistency in decision‑making, and long‑term team wellbeing.

As a result, platforms now face a growing expectation to explain and evidence how detection decisions are made. Regulators increasingly expect platforms to describe how risk is surfaced when no prior match exists, how decisions are reviewed, and how effectiveness is monitored over time — including through transparency and disclosure reporting. Detecting previously unseen content is now inseparable from explaining how and why action was taken, and from demonstrating that detection approaches are governed, effective, and able to withstand scrutiny.

What it takes to detect previously unknown CSAM

Detecting previously unknown child sexual abuse material rests on a different foundation than traditional approaches. This is not a matter of adding more tools or pushing existing systems harder. It depends on mandate, access to appropriate data, and the governance conditions that determine how that data can be handled responsibly.

This is where the distinction between platforms and law enforcement becomes decisive. Platforms cannot lawfully access or retain child abuse material — and nor should they. That boundary is fundamental to user protection, but it also constrains how systems designed to identify previously unseen material can be built, trained, and validated in a commercial environment.

Law enforcement operates under a different mandate. Child abuse material is handled in secure environments, by cleared specialists, and as part of criminal investigations. The datasets created in that context are assessed to evidential standards, with material consistently classified by severity, victim age, and offense type. This produces training data that reflects real‑world abuse patterns and supports accountability under legal scrutiny.

Recognizing this, the UK Home Office has established a controlled framework that allows cleared private‑sector specialists to contribute to child protection efforts using the Child Abuse Image Database (CAID). At a high level, defensible detection depends on integrating known‑match detection, the ability to surface previously unseen material, and human review into a single operating flow.

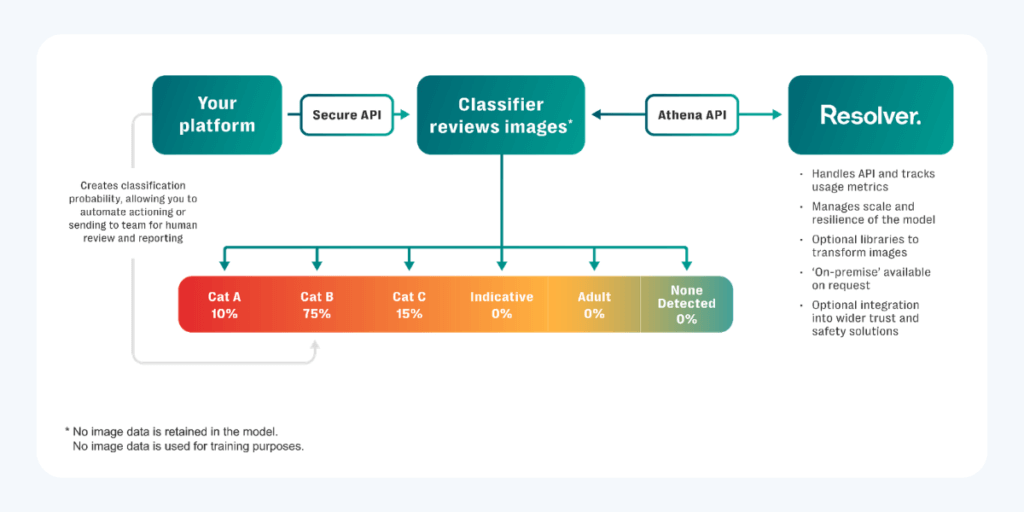

Illustrative flow showing how known and previously unknown CSAM can be assessed and routed for review without retaining image data.

Athena, Resolver’s end‑to‑end CSAM detection service, has been developed within that framework, providing a lawful basis for building detection capability without expanding access to sensitive material beyond those already authorized to handle it.

The implication is straightforward. Detecting previously unknown CSAM requires alignment between mandate, expertise, and access. Without that alignment, detection systems are constrained by design. With it, capabilities can be built that are both operationally effective and legally defensible.

What defensible CSAM detection enables in practice

When previously unknown CSAM can be detected on a defensible basis, the Trust & Safety operating model changes in ways that go beyond coverage or speed.

First, detection moves earlier in the content lifecycle. Rather than relying primarily on retrospective review, risk can be surfaced closer to content creation or upload, where intervention has the greatest potential to prevent distribution. This enables earlier action with clearer justification, instead of relying on post‑hoc review after harm has already spread.

Second, human judgment becomes more targeted. Review does not disappear, but it is applied more selectively. Instead of processing large volumes of undifferentiated material, teams can focus on cases where severity, uncertainty, or escalation genuinely require specialist assessment. Over time, this reduces unnecessary exposure and supports greater consistency as ambiguous or synthetic content becomes more common.

Defensible detection also enables more nuanced risk decisions. When outputs are consistent and traceable, platforms can move beyond binary outcomes and apply proportionate responses based on confidence, severity, and contextual indicators. This supports clearer internal oversight, particularly where trade‑offs between safety, user experience, and enforcement obligations must be managed deliberately.
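As a rough illustration of what a proportionate, non‑binary response could look like, the sketch below routes a detection result to one of several actions. Everything in it is hypothetical: the `Detection` fields, the 0 to 3 severity scale, the threshold values, and the action names are assumptions chosen for illustration, and they do not describe Athena or any regulatory requirement.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """Hypothetical response tiers; real platforms will define their own."""
    BLOCK_AND_ESCALATE = "block_and_escalate"
    HOLD_FOR_SPECIALIST_REVIEW = "hold_for_specialist_review"
    QUEUE_FOR_REVIEW = "queue_for_review"
    NO_ACTION = "no_action"


@dataclass(frozen=True)
class Detection:
    known_match: bool       # matched an existing fingerprint
    confidence: float       # classifier confidence, 0.0 to 1.0 (assumed scale)
    severity: int           # assumed scale: 0 (lowest) to 3 (highest)
    context_flags: int = 0  # count of contextual risk indicators (assumed)


def route(d: Detection, high: float = 0.9, low: float = 0.5) -> Action:
    """Map a detection to a proportionate action using illustrative thresholds."""
    # A known match is treated as the strongest signal.
    if d.known_match:
        return Action.BLOCK_AND_ESCALATE
    # High confidence combined with high severity is also acted on immediately.
    if d.confidence >= high and d.severity >= 2:
        return Action.BLOCK_AND_ESCALATE
    # High confidence alone, or moderate confidence with supporting context,
    # goes to specialist reviewers rather than being auto-actioned.
    if d.confidence >= high or (d.confidence >= low and d.context_flags > 0):
        return Action.HOLD_FOR_SPECIALIST_REVIEW
    # Moderate confidence with no supporting context joins the general queue.
    if d.confidence >= low:
        return Action.QUEUE_FOR_REVIEW
    return Action.NO_ACTION
```

Keeping the thresholds as explicit parameters, rather than burying them in the logic, is one way to make them reviewable and adjustable under internal oversight.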

Finally, it changes how platforms account for their decisions. When no prior match exists, the ability to show how risk was identified, how thresholds were set, and how outcomes were reviewed becomes critical. Defensible unknown‑CSAM detection provides the evidentiary basis for that accountability, allowing platforms to explain not just what action was taken, but why it was reasonable at the time.
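One way to picture that evidentiary basis is a structured decision record written at the moment of action. The sketch below is a minimal example under stated assumptions: the field names, the opaque `content_ref` identifier (used in place of any image data), and the JSON‑lines format are all hypothetical and are not drawn from any specific product or regulation.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    content_ref: str        # opaque identifier only; no image data is stored
    detector_version: str   # which model or ruleset produced the score
    score: float            # confidence reported by the detector
    threshold: float        # threshold in force when the decision was made
    action: str             # action taken, e.g. "hold_for_review"
    decided_at: str         # ISO 8601 timestamp in UTC
    reviewer_outcome: Optional[str] = None  # filled in after human review


def make_record(content_ref: str, detector_version: str, score: float,
                threshold: float, action: str,
                reviewer_outcome: Optional[str] = None,
                now: Optional[datetime] = None) -> DecisionRecord:
    """Build a record; `now` can be injected so records are reproducible in tests."""
    ts = (now or datetime.now(timezone.utc)).isoformat()
    return DecisionRecord(content_ref, detector_version, score,
                          threshold, action, ts, reviewer_outcome)


def to_audit_line(record: DecisionRecord) -> str:
    """Serialize a record as one JSON line with stable key ordering."""
    return json.dumps(asdict(record), sort_keys=True)
```

Recording the threshold and detector version alongside the score is what lets a later reader reconstruct why an action was reasonable at the time, even after thresholds or models have changed.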

Taken together, these shifts move detection from a reactive safeguard to a governed capability. They allow platforms to act earlier, while meeting expectations that decisions involving the most serious harms can be understood, audited, and justified under scrutiny.

Why detection now sits at the center of platform governance

What has become clear is that detection can no longer be treated as a narrow technical control or a retrospective safeguard. When platforms are expected to evidence how risks were identified, assessed, and acted on in the absence of a prior match, detection becomes part of governance.

For Trust & Safety teams, this shifts the question from whether harm can be addressed once surfaced to whether decisions can be explained, justified, and defended under scrutiny. That requires approaches that align legal mandate, specialist expertise, and operational reality — not simply greater scale or speed. As enforcement environments continue to mature, platforms that invest in defensible detection capabilities are better positioned to act earlier, reduce harm, and account for their decisions when it matters most.

For a more detailed explanation of how defensible CSAM detection is implemented in practice, see the Athena service overview.

About the author: Frances McAuley is Director of Product in Resolver’s Trust & Safety Division, where she leads the development of intelligence‑led capabilities supporting regulated platforms across online safety, compliance, and enforcement.