Benchmarking has become common in ethics and compliance programs. Boards, audit committees, and program leaders often look to peer comparisons when evaluating effectiveness. Over time, those comparisons have become one reference point for assessing program maturity and overall health.

Many leaders look to benchmark data as reassurance that their program is performing as expected. If reporting volume aligns with peers, the hotline appears healthy. If case closure time falls within industry ranges, investigations seem effective. If substantiation rates look typical, oversight appears to be working.

That logic feels reasonable. It also skips a step between the numbers and what they actually explain.

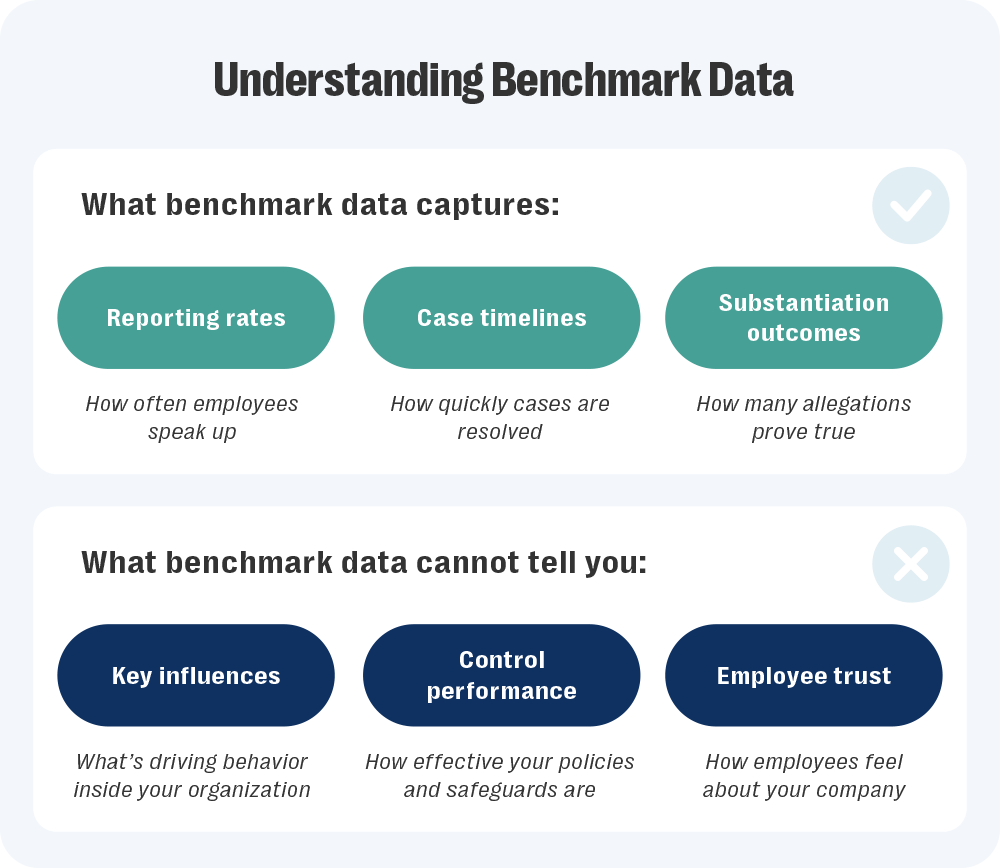

Ethics and compliance benchmarking compares your numbers to someone else’s (reports per 100 employees, median case duration, percentage of anonymous submissions) and shows where you rank. It does not explain why you rank there. A high reporting rate could signal trust in the system. It could also reflect unresolved misconduct patterns. A low rate could reflect strong culture, or silence. Without context, benchmark data creates confidence faster than it creates understanding.

That gap is where misplaced certainty begins. In this post, we’ll examine how leaders interpret benchmark results, where false confidence creeps in, and how to use comparison data as an input to judgment rather than as proof.

What benchmarking measures — and why it became the baseline

Ethics and compliance benchmarking starts with a simple question: How do our numbers compare to peer organizations?

Industry groups and consulting firms collect data across similar organizations and package it into ranges and medians. Reporting volume gets scaled to account for company size. Case timelines and substantiation outcomes are grouped within sectors, so comparisons don’t mix unrelated programs.
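To make the scaling step concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the peer figures are invented, the function names are hypothetical, and using the cohort’s quartiles as the "expected range" is just one plausible convention. Benchmark providers define cohorts and ranges in their own ways.

```python
from statistics import median, quantiles


def reports_per_100(reports: int, employees: int) -> float:
    """Scale a raw report count by headcount so organizations of
    different sizes can be compared on the same footing."""
    if employees <= 0:
        raise ValueError("employees must be positive")
    return 100 * reports / employees


def cohort_range(rates: list[float]) -> tuple[float, float, float]:
    """Return (lower quartile, median, upper quartile) for a peer cohort.
    Treating the quartiles as the 'expected range' is an assumption."""
    q1, _, q3 = quantiles(rates, n=4, method="inclusive")
    return q1, median(rates), q3


def position(rate: float, q1: float, q3: float) -> str:
    """Describe where a rate sits relative to the cohort's middle band."""
    if rate < q1:
        return "below range"
    if rate > q3:
        return "above range"
    return "within range"


# Hypothetical peer cohort: (annual reports, employees) per organization.
peers = [(42, 3000), (120, 8000), (15, 1200), (260, 20000), (90, 5000)]
peer_rates = [reports_per_100(r, e) for r, e in peers]

q1, mid, q3 = cohort_range(peer_rates)

# Hypothetical program being compared against the cohort.
our_rate = reports_per_100(30, 4000)
our_position = position(our_rate, q1, q3)
print(f"our rate: {our_rate:.2f} per 100, cohort median: {mid:.2f}, {our_position}")
```

The output of a calculation like this is a position, nothing more. A "below range" result does not say whether the cause is strong culture or silence.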

The result is a snapshot of where your program sits in the broader field:

- Are case closure times faster or slower than those of peer organizations?

- Do substantiation rates or anonymous reporting levels fall within the expected range?

- Do retaliation allegation levels align with industry trends?

That view gives leaders something solid to reference when scrutiny increases. That outward focus is a large part of why benchmarking became standard.

Boards want reassurance that nothing looks out of line. Regulators often examine whether programs sit within reasonable bounds. Peer comparison offers a quick way to answer both. If reporting volume falls near the median, concern softens. If case timelines match sector ranges, investigations appear steady. If substantiation rates align with comparable organizations, oversight seems consistent.

Those signals travel well in high-stakes conversations.

Over time, that convenience shaped behavior. Dashboards began pairing internal metrics with industry ranges. Annual reviews led with external alignment. Leaders started scanning for deviation before digging into cause. Comparison became the first lens, and once it was in place, "typical" started to feel sufficient.

Benchmark data answers one question well: How do your metrics stack up against peers? That clarity has value because it can surface outliers and flag patterns that deserve closer review, especially when a metric drifts beyond expected ranges. Used properly, ethics and compliance benchmarking helps frame oversight conversations and gives leaders shared context when numbers draw attention.

What comparison does not do is settle judgment — or substitute for it. It frames the discussion, but it does not resolve what those numbers mean inside your organization or how they would withstand deeper scrutiny. The distinction becomes clear once leaders start asking what benchmarking can legitimately support, and where its limits begin.


What benchmarking can (and can’t) support

Benchmarking is strongest when used as a calibration tool.

It helps leaders understand whether internal reactions are proportionate. A sudden change in reporting volume may feel alarming, but peer data can show whether similar shifts are occurring elsewhere. A longer investigation cycle may raise concern, yet sector patterns can reveal whether the delay reflects broader operational strain rather than isolated failure.

That perspective prevents overcorrection.

Ethics and compliance benchmarking can also support credibility in oversight conversations. When directors ask how the program compares, external reference points provide grounding. They demonstrate awareness of market practice and show that metrics are not reviewed in isolation. What benchmarking cannot provide is proof of effectiveness.

Comparison does not confirm that controls operate as intended. It does not validate investigative quality. It does not demonstrate cultural strength. Those conclusions require internal evidence, not external alignment.

Benchmarking highlights position. Judgment still requires analysis.

How operating conditions shape benchmark results

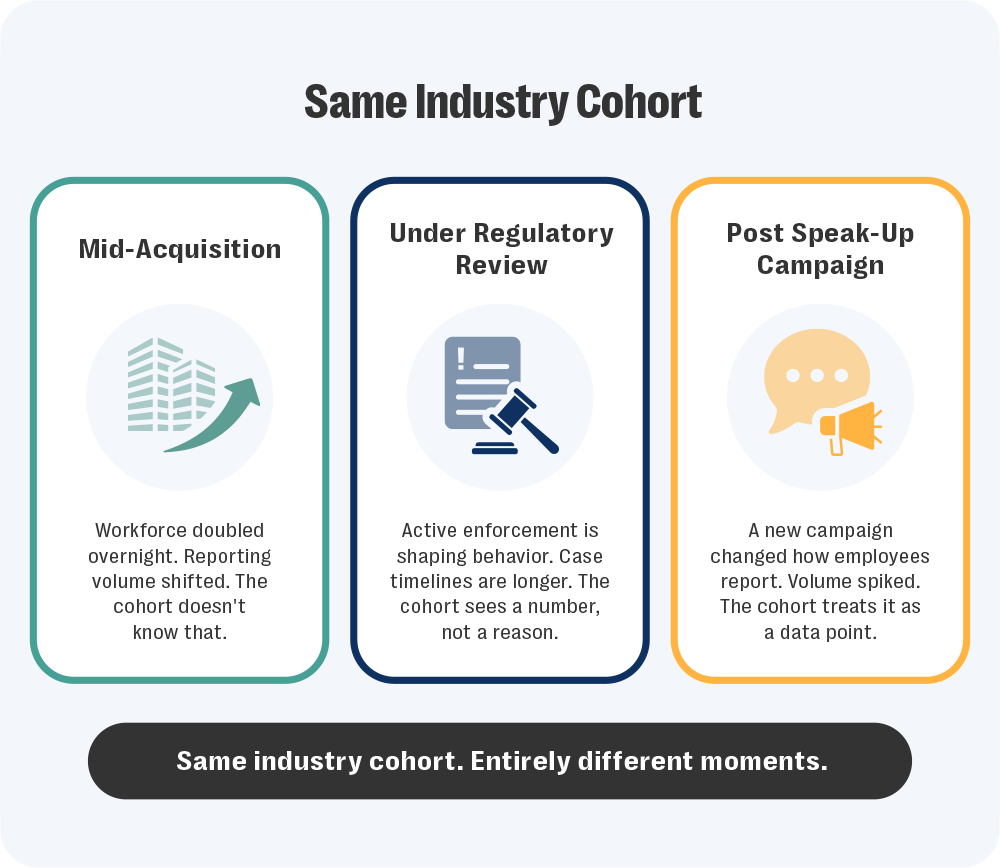

Benchmark cohorts are built to create structured comparison. Organizations are grouped by industry, company size, geography, or reporting volume so their metrics can be evaluated within a shared band. The goal is consistency. Comparing like-sized financial institutions produces more useful ranges than comparing a global bank to a regional manufacturer.

Cohort design controls for structural differences. What it cannot control for are operating conditions inside those organizations.

Companies in the same industry band may sit in very different moments. One may be integrating an acquisition that doubled its workforce. Another may be under active regulatory review. A third may have launched a new speak-up campaign that changed reporting behavior overnight. All three remain in the same cohort. Their internal pressures are not the same.

Cohorts make structural comparison possible. They don’t reflect the moment your program is operating in. Growth, disruption, leadership change, or regulatory pressure all shape how metrics move, even when the cohort remains the same.

Leaders who overlook those conditions risk treating alignment as reassurance or deviation as failure without asking what actually drove the shift. When internal context stays part of the analysis, benchmark data strengthens oversight rather than narrowing it.

Using ethics and compliance benchmark data to strengthen oversight

Benchmark data carries more weight once it leaves the analytics dashboard.

In board and oversight discussions, numbers rarely stay neutral. A dip in reporting volume invites concern about trust. Extended case timelines raise questions about investigative rigor. Shifts in substantiation patterns draw attention to thresholds and controls. The interpretation happens quickly, often before internal context is discussed.

That is where leadership judgment shows.

External ranges can ground the conversation, but they cannot carry it. What matters is how leaders explain the forces behind the movement. Growth cycles, enforcement activity, cultural shifts, and resource strain all shape metrics in ways peer distributions cannot show. Oversight improves when comparison sets the context and internal evidence provides the substance. When those roles stay distinct, benchmark results sharpen inquiry rather than narrow it.

Used that way, ethics and compliance benchmarking strengthens governance. Used carelessly, it compresses complex realities into simple reassurance.


Go from benchmarking to case-level clarity with Resolver

Benchmark data raises questions that leaders still need answers to.

Those answers live inside your hotline reporting process, your triage decisions, and your investigation workflows. Without visibility into how cases move, why thresholds shift, or where delays occur, peer comparison remains surface-level.

Resolver’s Whistleblowing & Case Management solution connects peer context to internal evidence. Structured case intake captures reporting patterns in real time. Intelligent triage helps teams prioritize higher-risk reports and route them to the right investigators. Flexible workflows adapt to regulatory requirements without IT support. AI Case Summarization and Dashboards help translate case activity into board-ready reporting.

Ethics and compliance benchmarking shows where you stand. Resolver shows why.

Through our partnership with the Ethics and Compliance Initiative (ECI), external benchmarking pairs with hotline reporting, end-to-end case management, investigation tracking, and analytics inside a single platform. The result is oversight that withstands scrutiny.

If you’re ready to move beyond peer alignment and pressure-test your program, speak with our team to see how Resolver and ECI bring operational visibility to your benchmarking.