Generative AI is supercharging the creation of child exploitation content

Generative AI is a force multiplier for malicious actors who target children through online platforms and services. The growing sophistication and accessibility of generative tools have increased the speed, scale and capabilities with which offender groups produce and disseminate AI-generated child sexual abuse material (AI-CSAM). Offenders then exploit this harmful synthetic content for both commercial gain and their own sexual gratification.

Simultaneously, the proliferation of cross-platform offender communities, where users share techniques and tradecraft for creating AI-CSAM, provides an entry point for new offenders and a pathway to profit from the synthesis of such harmful content. The same communities allow users to request bespoke images or models, sell explicit images and sexual services, and even crowdsource prompts and techniques to bypass in-built moderation systems on commercially available generative tools.

Taken in the context of a broader surge in the accessibility of CSAM across a range of online platforms, the additional time and resources required to detect and process AI-CSAM are likely to create technical, operational and regulatory challenges for organizations tasked with safeguarding children online.

Generative AI used to create photorealistic CSAM

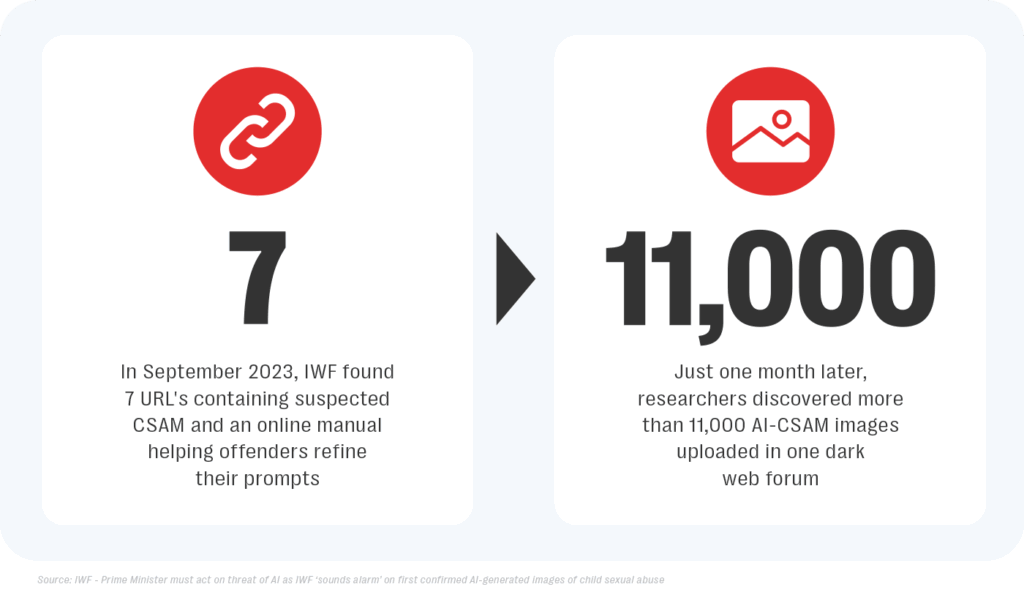

Since early 2023, incidents of predators using popular generative AI services to create photorealistic CSAM have been increasing steadily. In September 2023, the Internet Watch Foundation (IWF) raised the alarm after discovering, on the open web, seven URLs containing suspected AI-CSAM as well as an online “manual” that helps offenders refine their prompts and synthesize more realistic imagery. This content included explicit depictions of both male and female children as young as three to six years old.

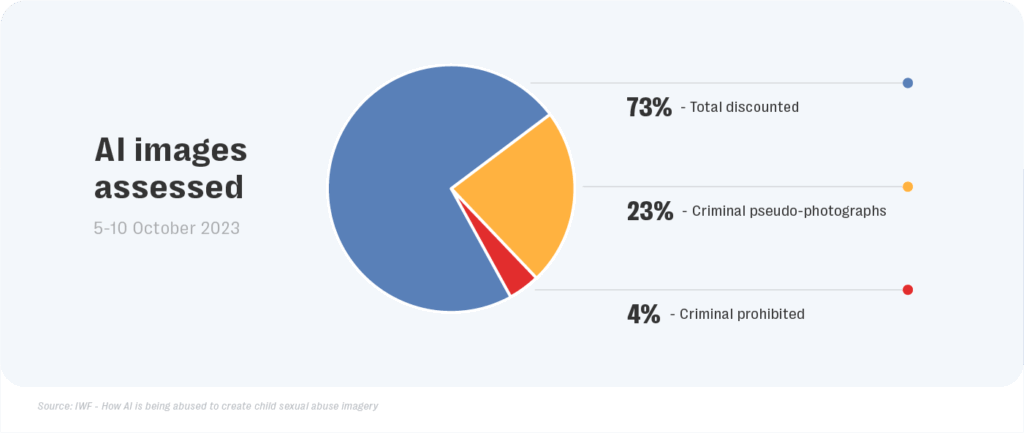

A subsequent report published by the IWF in October reviewed more than 11,000 AI-CSAM images that had been uploaded to one dark web forum over a four-week period. IWF analysts found 2,978 images to be criminal under UK law (based on the appearance of being a real photograph of a child), while ‘six times as many images were assessed as realistic pseudo-photographs (images that depicted minors in sexual situations that had signs of inauthenticity) than were assessed as non-realistic prohibited images’. This finding underlines the sophistication of violative content currently being distributed among offender communities online.

Months later, researchers at Stanford uncovered more than 1,600 images of suspected CSAM in LAION-5B, a large dataset used to train several popular image generation services. The analysis concluded that access to this dataset ‘implied the possession of thousands of illegal images, including intimate imagery published and gathered non-consensually’ and highlighted that ‘if a model is trained using data that includes CSAM, its weights might replicate or even generate new CSAM’.

Recently, in January 2024, officials at the U.S. National Center for Missing and Exploited Children (NCMEC) informed Reuters that their organization had received 4,700 reports of AI-CSAM in 2023, adding that the ‘figure reflected a nascent problem that is expected to grow as AI technology advances’.

Accessibility of AI-CSAM causes social harm

By allowing child predators to produce hundreds of AI-CSAM images with the click of a button, the misuse of generative technology can fuel offending behavior, contribute towards normalizing the sexualization of minors, and increase the risk of involuntary exposure to such harmful content on the surface web. This threat is reiterated by Danielle Williams, Child Endangerment, Suicide and Self Harm Lead at Resolver, a Kroll business, who warns that such technology “provides huge scalability in terms of the production of content of children for sexual gratification”.

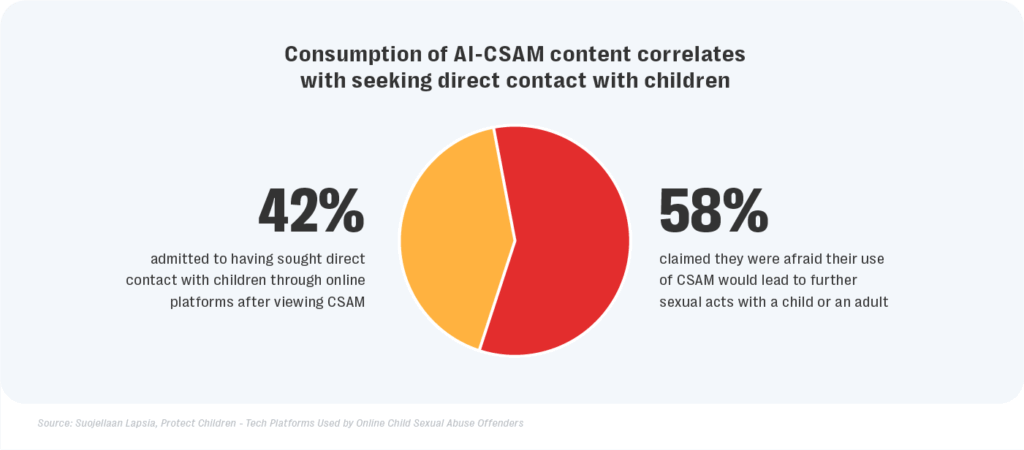

The regular consumption of AI-CSAM can also increase the risk of more egregious forms of harm. Research conducted by Protect Children, which surveyed individuals searching for CSAM on dark web search engines, found that the consumption of such content was ‘strongly correlated with seeking direct contact with children’. Of 1,546 anonymous individuals surveyed, 42% admitted to having sought direct contact with children through online platforms after viewing CSAM, while 58% said they were afraid their use of CSAM would lead to further sexual acts with a child or an adult.

The increased volume and “realism” of synthetic CSAM being disseminated online create complications for platforms that employ hash-matching technology to automate the detection of harmful content. In particular, such systems are unable to flag new AI-CSAM images because they rely on comparing imagery to a database of known, hashed CSAM images. Without a fully automated solution, the burden of manually reviewing individual images will likely increase the time enforcement agencies need to establish whether an image depicts a real victim, prolonging the suffering of real victims in the meantime.
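The limitation of hash matching can be illustrated with a minimal sketch. It assumes an exact-match cryptographic hash (SHA-256) and uses harmless placeholder byte strings in place of files; the names `known_hashes` and `is_known` are hypothetical and do not reflect any particular vendor's system. Production tools generally rely on perceptual hashes, which tolerate small changes such as resizing, but they still depend on a previously identified original.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of a file's bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical stand-in for a database of hashes of previously identified files.
known_hashes = {sha256_hex(b"previously-identified-file")}


def is_known(data: bytes, known: set) -> bool:
    """Flag a file only if its hash already appears in the database."""
    return sha256_hex(data) in known


# A file already in the database is flagged.
print(is_known(b"previously-identified-file", known_hashes))  # True

# A newly generated file has no prior hash, so it passes unflagged.
print(is_known(b"newly-generated-file", known_hashes))  # False
```

Because the lookup can only succeed for material that has already been identified and hashed, newly synthesized content falls outside its reach and has to be caught by other means, such as classifiers or manual review.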

Exploiting closed-source and open-source GenAI models

The availability of open-access text-to-image models, along with online repositories where users can access how-to guides, datasets of training images and even models already trained to output imagery depicting harmful sexual acts, allows malicious actors to download everything they need to generate AI-CSAM offline, with less opportunity for detection.

In contrast, closed-source models employed by mainstream text-to-image services incorporate multiple safeguards to deter misuse by offender groups. These moderation systems attempt to disallow violative content (like violence or pornography) by limiting either the dataset used to train their algorithms or the prompts that can be used to generate images. Other approaches to moderation include analyzing the output before presenting it to the user; however, this can be costly and lead to a poor user experience. Despite such measures, malicious actors are constantly developing new tactics aimed at circumventing these in-built moderation systems.

Some of the most commonly employed exploits by perpetrator communities target vulnerabilities in the language coverage offered by particular generative tools by experimenting with explicit prompts in different languages. Other techniques involve the use of niche expressions, keywords, references to specific AI-CSAM creators and predator manuals or a successive combination of prompts to steer the model towards generating violative content.

AI-CSAM distributed across a range of online platforms

Offenders also leverage social media, pornography websites, gaming platforms, file hosting repositories and encrypted messaging applications to create cross-platform communities dedicated to generating AI-CSAM, sharing instructional content, and distributing and selling harmful images. Members of such communities also misuse social media and gaming platforms to search for vulnerable children to groom or sexually extort.

With regard to the latter, generative technologies can also aid in the financial sextortion of minors, with Danielle Williams warning that the use of AI “provides another round of scalability through the use of chatbots and image generators”. As a result, “a minor no longer has to be persuaded to send an indecent image of themselves, as AI can declothe any freely available image of that child.”

In June 2023, the BBC reported on a network of accounts on a variety of online platforms that profit from the distribution of AI-CSAM. The investigation revealed how creators promote their content using hashtags and posts on image sharing websites hosted in countries where sharing sexualized cartoons of children is not illegal, before redirecting users to other accounts hosted on popular content-sharing websites where they are charged a subscription fee for “uncensored” access. Creators using such accounts are reported to produce thousands of images every month, with some also sharing links to real-life CSAM.

Major tech and gaming platforms enforce community standards that proactively ban CSAM; however, difficulty distinguishing between synthetic media and real-life content can complicate moderators’ efforts to categorize and report the increased volume of AI-CSAM imagery. Moreover, to skirt enforcement, perpetrators often employ associated accounts on encrypted messaging applications, smaller platforms with lax moderation, and decentralized networks whose servers are hosted in countries where such content is not currently illegal.

Conclusion

The threat posed by bad actors misusing generative technologies to produce AI-CSAM will grow over time as rapid innovation in the field continues to reduce the resources required to generate explicit imagery while vastly improving the realism of this synthetic content. In the near term, solutions such as removing sexual content from the training data used by generative tools, biasing models against generating images depicting child nudity, and watermarking synthetic content to facilitate detection can help mitigate the misuse of AI to create violative content.

Our Platform Trust and Safety solution offers a fully managed service that allows platforms to enhance their community safety with integrated content and bad actor detection and external intelligence, offering partners comprehensive protection against emerging online threats. Working with many of the world’s leading Generative AI platforms, Resolver, a Kroll business, provides red teaming exercises, strategic intelligence reporting and rapid alerting of emerging threats including child safety risks, suicide, self-harm behavior and other high harm activities.