Online service providers are now being held accountable not just for individual pieces of content, but for how their systems shape risk over time.

After Netflix’s award-winning series Adolescence brought the manosphere’s influence on young boys to mainstream attention, Louis Theroux’s Inside the Manosphere has put the men behind these communities on screen — the influencers, the ideology, and the insecurity beneath them. What the documentary shows less clearly is the audience: the boys being shaped by this content, one video, one community, and one quietly reinforced idea at a time.

Under the UK’s Online Safety Act (OSA), platforms are now legally required to assess and document exactly that risk. Ofcom’s enforcement powers are active, and it has already issued fines where providers could not demonstrate that they had carried out suitable and sufficient risk assessments.

“Hate content targeting women and girls sits squarely within Ofcom’s priority harm categories,” says Mark Phillips, subject matter expert (Hate) at Resolver Trust & Safety. “Platforms that haven’t documented how that risk develops on their service are already exposed — including through content that doesn’t trigger a single policy violation in isolation but contributes to a documented harm pathway over time.”

The question platforms now face is not whether these ecosystems exist. It is what they are legally expected to do about them.

Risk assessment is now the accountability test

Under modern online safety regimes, including the UK’s Online Safety Act, the standard is no longer whether harmful content was eventually removed. It is whether a service provider can demonstrate a proactive, evidence‑based understanding of how harm develops on its service — including through recommendation systems, community dynamics, and the cumulative effect of repeated exposure. A pathway you cannot evidence is a risk you cannot defend.

A provider that cannot show it understood how a misogynistic harm pathway was developing before it became the subject of an enforcement inquiry, public scrutiny, or internal escalation faces real regulatory exposure. That applies with particular force to harms targeting women and girls, which regulators have explicitly identified as a priority category. The gradual, community‑driven radicalization depicted in recent documentaries reflects the type of systemic risk these frameworks are designed to surface.

In its first year of enforcement, Ofcom challenged multiple providers over inadequate risk assessments, including cases where risk levels were set too low. Ofcom has made clear that risk assessment records must already exist and capture how risks were identified, assessed, and reviewed. They cannot be reconstructed after the fact.

Under the Act, Ofcom has the power to require information, commission audits, and issue fines based on whether a service provider’s risk assessments are adequate and its safety measures proportionate. Since enforcement began, those powers have been applied across a range of services, including large mainstream platforms and smaller, higher‑risk providers.

In March 2026, Ofcom set binding deadlines for further action, with explicit warnings that noncompliance will trigger enforcement. Risk assessment records for illegal content and children's safety are due between May and July 2026. For providers that cannot evidence how risks like this one were identified and assessed, that deadline is not an administrative formality. It is a point of legal exposure.

Adequacy is not self‑declared. If a regulator asks how a service understood this risk — and when — the answer must already exist, in writing, and independent of whatever incident prompted the question. With year‑two risk assessment records due between May and July 2026, that question is no longer hypothetical.

Misogyny is socialized before it becomes explicit

“The ‘manosphere’ as a term describes an increasingly varied number of communities and online spaces, each with specific ideologies and focal points,” says Phillips. “Treating it as a single phenomenon risks overlooking the specific dynamics of individual communities, and makes it harder to build policies that effectively mitigate the risks each one poses.”

What these pathways have in common is that they rarely begin with content that would trigger enforcement or moderation on its own. They often start in spaces that, by content‑policy standards, appear ordinary: self‑improvement content, fitness and lifestyle advice, discussions about relationships, or communities addressing loneliness, rejection, or uncertainty about identity and status. None of this content is inherently harmful in isolation.

“There is rarely a single entry point into these communities,” Phillips notes. “The pathway can begin with entirely ordinary searches — advice on social skills, career questions, even religious inquiry — that gradually surface manosphere creators through recommendation and engagement signals. One of the most consistent patterns we observe is the journey from mainstream platforms to encrypted messaging applications, where users enter echo chambers with exposure to increasingly harmful narratives.”

How harm pathways encode meaning across communities

The examples below illustrate how seemingly benign terms, emojis, or communities can signal deeper ideological alignment within these pathways.

| Emoji | Term | Coded meaning |
|---|---|---|
| 💊 | Red pill | “Waking up” to perceived anti-male bias |
| ⚫ | Black pill | Nihilism; no hope of change |
| 🫘 | Kidney bean | Subtle incel self-identifier |
| 💪 | Flexed bicep | Male dominance, gym culture, high status |
| 🐍 | Snake | Insult for men who support gender equality |
| 👑 | Crown | Male supremacy; “men are kings” |

In Resolver’s work, the progression from ordinary entry points to explicit misogyny often appears incremental to both users and service operators. Content that might once have felt marginal or extreme becomes familiar through repetition. That gradual shift is precisely what makes this category of risk difficult to identify using post‑level detection alone. By the time misogynistic content reaches a threshold for enforcement action, the pathway that produced it may have been developing for months. In many cases, that development is not reflected in the risk‑assessment records that regulators will later ask to see.

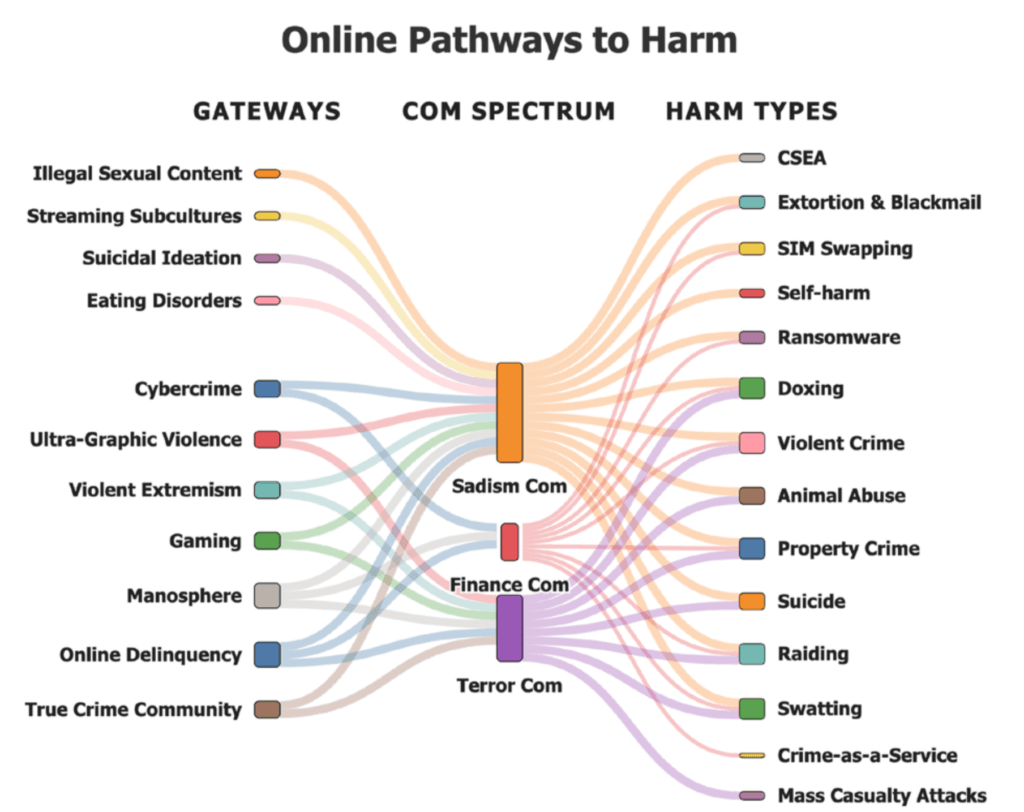

The manosphere is rarely the endpoint. Resolver’s research into hybrid online harm ecosystems shows it functioning as a gateway into more severe environments, where radicalization, exploitation, and coordinated harm intersect. Understanding how these pathways form — rather than focusing solely on individual pieces of content — is essential to meeting Ofcom’s risk‑assessment obligations.

Gateway communities and behaviors converge into shared ecosystems that generate multiple downstream harms, as identified in Resolver’s Weaponised Loneliness report. Pathways like the manosphere don’t operate in isolation; they intersect and compound risk across multiple harm categories.

The harm pathway problem that detection can’t solve

Detection systems are designed to assess content at the point of review: a post, a comment, a video clip, each evaluated against a set of rules. That approach works well when violations are clear and contained.

Pathway harms operate differently. The risk isn’t in any single piece of content. It’s in the cumulative narrative that emerges when content is encountered repeatedly, in sequence, within a community that reinforces it. Individual signals may be unremarkable. The harm is in the direction of travel.

Detection can identify individual items of content. It does not capture how repeated exposure, recommendations, and community context shape risk to young users over time.

Much of this content is designed to remain below enforcement thresholds. It is engaging enough to sustain attention, but careful enough to avoid removal when assessed in isolation. The harm develops in the gap between what systems reward and what policies prohibit.

Addressing this requires a different set of questions. Not just, “Does this post violate our rules?” but, “What pattern is developing here? How is exposure accumulating over time? And what response is proportionate before this risk becomes visible through an enforcement inquiry, public scrutiny, or internal escalation?”

What early intervention actually requires

Phillips points to a consistent pattern in Resolver’s assessments of this harm category: the intervention points that matter are rarely the obvious ones. They tend to be earlier and subtler, and they require analysis that keyword detection and post‑incident review cannot always provide.

Intelligence on how these ecosystems develop. Misogynistic communities do not stay still. When platforms act on the most visible spaces, activity often migrates to less‑scrutinized features, adjacent platforms, or rebranded community structures. Understanding where activity moves, and how language and framing adapt to evade detection, requires analysis that extends beyond a platform’s own systems. Without it, enforcement action on one surface simply displaces the harm to another, leaving no documented basis for knowing that shift occurred.

“Language that originated within explicitly misogynistic communities — including terminology from incel spaces — has progressively entered the mainstream internet lexicon,” Phillips says. “That creates a significant analytical challenge. Platforms must assess not just whether a term appears, but the context and intent behind its use. Broad policy responses become harder to apply consistently, and enforcement risks falling behind rapidly evolving behavior rather than addressing its root causes.”

Human intelligence that recognizes early-stage signals. These communities actively adapt to evade detection, which means the signals that matter most are adversarial as well as contextual and cultural. They require analysts who understand not just what content says, but what it is doing within a specific community at a specific moment. Platforms that rely solely on automated detection are likely to encounter this harm only after the narrative is established and the audience already shaped.

Documented risk reasoning that can be explained. Early intervention also depends on a structured account of what was understood, when it was understood, and why decisions were made, not just a record of what content was removed. When regulators, boards, or legal teams ask questions later, the answer needs to exist before the question is asked. Attempts to reconstruct decision‑making after the fact create not just operational risk, but legal exposure.

How Resolver bridges the intelligence gap

Resolver’s human intelligence capability is designed for the gap between detection and decision. Our analysts work outside platforms’ own systems to identify how harmful communities and narratives develop, before they become visible through standard detection signals.

On misogyny and violence against women and girls (VAWG), this means tracking how recruitment pathways evolve, how language and framing shift to evade detection, and how community dynamics develop from ambient hostility toward coordinated targeting or offline harm.

The goal is not to replace platform moderation. It is to make the harm pathway visible, ideally early enough for the response to be proportionate and clearly documented.

If a regulator, internal investigation, or board inquiry asks when a platform first identified a harm pathway like this, the answer cannot begin with the moment a journalist asked questions. Under the OSA, that answer is expected to already exist — with documented analysis showing how the risk was recognized, assessed, and monitored as it developed.

The documentary has done its job. Now platforms need to do theirs — before the next one is made about them.

See how Resolver helps platforms map harm pathways and understand risk in practice.

About the authors:

Nadine Araksi is content marketing manager for Resolver’s Trust & Safety division. She brings a background in journalism and editorial leadership to developing content that connects complex online safety and regulatory challenges to the decisions platforms, legal teams and policymakers need to make.

Mark Phillips is subject matter expert (Hate) at Resolver Trust & Safety, specializing in hate speech and online radicalization. His work spans far-right extremism, white supremacy, extreme nationalism and LGBTQ+ hate speech, with a particular focus on how these risks manifest and interconnect across global platforms. He is trained in Structured Analytical Techniques, OSINT and dark web analysis, and contributes to Resolver’s intelligence reporting on emerging and hybrid online threats.