I went into Compliance Week’s The Leading Edge forum expecting thoughtful discussion, and that’s exactly what I experienced. What stood out was the consistency of what people were focused on, no matter where the discussion happened.

AI came up early and often at the conference. Not as hype, but as a working topic tied to real operating pressure. Compliance teams are taking on more responsibility as AI enters daily workflows while resources remain tight, which shapes how leaders think about priorities and approvals.

Across sessions and informal conversations, people kept returning to the same practical questions. How does a specific AI use fit into an existing process? Where does it reduce manual effort? Where does it introduce risk that still requires review? The focus stayed on day-to-day execution, not abstract potential.

That same tension shaped my session on building a business case for AI in compliance. The discussion centered on how teams move from interest to approval without weakening control. It addressed how leaders evaluate AI inside regulated workflows, how accountability remains clear, and what needs to be solved before efficiency gains carry weight.

Below, I’ll break down those considerations, starting with how teams can frame AI initiatives around their current priorities.

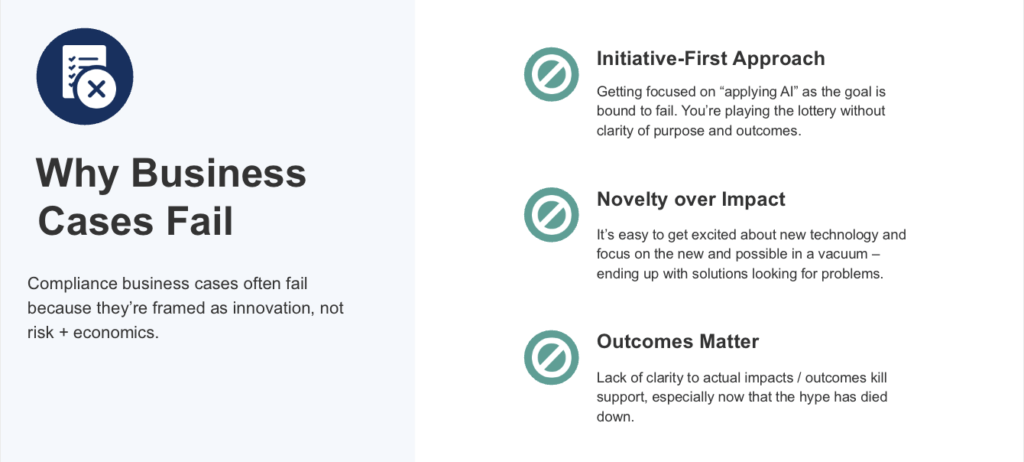

Why a business case for AI in compliance might stall before approval

Most AI proposals stall because the case for change doesn’t match how decisions are made.

One issue is framing. Many proposals lead with capability before clearly defining the problem. When the pressure isn’t specific, the value feels abstract. Leaders responsible for regulated programs want to see the operational gap first. Only then can they evaluate how review, responsibility, and defensibility are affected.

Another problem is distance from real work. Time savings often get described in broad terms. If it’s not clear which tasks shrink, which stay the same, and where effort shifts, leaders pause. Vague gains feel risky when teams already operate close to capacity.

Oversight is where most cases slow down. As soon as automation enters a workflow, questions surface about review points and escalation. If those paths aren’t clear, confidence drops quickly.

A stronger business case for AI in compliance addresses these concerns head-on. It starts with pressure teams already feel. It explains how work changes in practical terms. When control remains visible, leaders can assess risk and move the conversation forward.

How expanding scope is shifting risk tolerance in AI decisions

The current scope for ethics and compliance leaders looks very different than it did a few years ago. They’re handling more reports, more review expectations, and more scrutiny around how decisions get made. None of that came with extra capacity.

That context matters when AI enters the conversation. Most teams aren’t looking for another initiative. They’re trying to keep pace without letting things slip through the cracks. When an AI proposal doesn’t acknowledge that pressure, it feels disconnected from reality.

A business case can’t treat AI as a side project. It has to show how AI fits into work that teams already own. Leaders want to understand whether it helps absorb added responsibility or quietly adds another layer to manage.

One example is intake and review. As volume increases, consistency becomes harder to maintain. If AI can help structure information earlier, flag higher-risk cases sooner, or reduce follow-up work, that’s useful. If it creates new review steps or unclear handoffs, it becomes a liability.

That’s why expanding scope raises the bar for approval. Leaders aren’t just asking what AI does. They’re asking whether it makes an already stretched program easier to run, or harder to explain later.

How accountability holds as automation increases

When AI enters a compliance workflow, the first question leaders ask is simple: Who owns the outcome if something goes wrong?

That question doesn’t come from resistance to automation. It comes from experience. In regulated programs, accountability can’t be implied. It has to stay clear even as work moves faster.

Problems start when AI feels like it’s making decisions on its own. If a proposal can’t explain who reviews exceptions, who signs off, or where judgment still applies, leaders hesitate. Not because the tool is flawed, but because responsibility feels harder to trace.

The approach that works is more straightforward. AI needs to map to current workflows, not introduce parallel ones. It should reduce bottlenecks that already exist, such as incomplete intake or delayed triage, rather than add new review layers.

When automation fits inside the existing process, ownership doesn’t shift. The same roles remain responsible for review and final decisions. AI helps surface issues sooner, but it doesn’t become a new decision-maker in the middle.

Responsibility stays where it already lives, and the workflow becomes easier to run under pressure.

How leaders decide if AI is worth it

Once accountability is clear, leaders move on quickly. The question becomes less about ownership and more about disruption. They want to know what changes in practice, not in theory.

When leaders evaluate an AI proposal, they tend to pressure-test the same areas:

- Impact on existing work: They look for a clear explanation of which steps take less effort and which remain unchanged. If that shift isn’t visible, efficiency claims feel abstract.

- Clarity during review: Leaders want to understand how decisions get reviewed once automation is involved. If review paths become harder to explain, confidence drops.

- Effect on prioritization: As volume increases, teams need consistency. Leaders pay attention to whether AI helps focus attention or adds another layer to manage.

- Downstream effort: Any time saved early in the process needs to hold up later. If speed up front creates cleanup work downstream, the tradeoff doesn’t work.

When reports come in missing key details, teams spend time following up before assessment even begins. Using AI to prompt for context earlier reduces that back-and-forth and gives reviewers a clearer starting point.
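
To make the intake example concrete, here is a minimal sketch of prompting for missing context at submission. It is a rule-based stand-in, not a description of any specific product or model: the field names, the questions, and the `follow_up_prompts` helper are all hypothetical, and a real program would define its own required details.

```python
# Hypothetical required intake fields and the question to ask when one is missing.
# These names and questions are illustrative assumptions, not a real schema.
REQUIRED_FIELDS = {
    "what_happened": "Can you describe what happened?",
    "when": "When did this occur? Approximate dates are fine.",
    "where": "Where did this take place (site, team, or system)?",
}


def follow_up_prompts(report: dict[str, str]) -> list[str]:
    """Return one question for each required field that is missing or blank."""
    return [
        question
        for field, question in REQUIRED_FIELDS.items()
        if not report.get(field, "").strip()
    ]


# Example: the reporter described the event but left timing blank and location out.
report = {"what_happened": "A vendor offered gifts to a buyer.", "when": "  "}
prompts = follow_up_prompts(report)

for question in prompts:
    print(question)
```

The point of the sketch is the placement, not the logic: the questions are asked while the reporter is still engaged, so the reviewer who already owns the case starts with fewer gaps to chase.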

The same applies during triage. As volume increases, teams need a consistent way to surface higher-risk cases without re-evaluating every report from scratch. Clearer signals help teams focus attention sooner and apply judgment where it matters most.
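
A similar sketch can illustrate the triage idea: signals order the queue, and people still make the decisions. Everything here is an assumption for illustration, including the signal names, the weights, and the `Case` structure. In practice the signals might come from a model or from structured intake answers.

```python
from dataclasses import dataclass

# Hypothetical risk signals and weights; a real program would set its own.
SIGNAL_WEIGHTS = {"retaliation": 3, "safety": 3, "fraud": 2, "senior_leader": 2}


@dataclass
class Case:
    case_id: str
    signals: set[str]
    reviewer: str  # the existing owner; triage never reassigns responsibility


def risk_score(case: Case) -> int:
    """Sum the weights of the signals flagged on a case (unknown signals count as 0)."""
    return sum(SIGNAL_WEIGHTS.get(signal, 0) for signal in case.signals)


def triage_queue(cases: list[Case]) -> list[Case]:
    """Order cases so higher-scoring ones are reviewed first; ties keep intake order."""
    return sorted(cases, key=risk_score, reverse=True)


cases = [
    Case("A", {"fraud"}, reviewer="investigations"),
    Case("B", {"retaliation", "senior_leader"}, reviewer="ethics_office"),
    Case("C", set(), reviewer="investigations"),
]
queue = triage_queue(cases)

for case in queue:
    print(case.case_id, risk_score(case), case.reviewer)
```

Note what the sketch does not do: it never closes, dismisses, or reassigns a case. The score only changes the order in which the same reviewers look at the same reports, which keeps the review path easy to explain later.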

This is how leaders assess tradeoffs. They look for changes that reduce follow-up, shorten review cycles, and make decisions easier to explain later. If execution details support that outcome, efficiency becomes easier to justify.

Making AI work inside your ethics and compliance program

Ethics and compliance teams feel pressure most at intake and review. Structured reporting helps fix that. Prompting for missing details at submission reduces clarification emails and shortens early review. Reviewers start with clearer information and spend less time reconstructing events.

As volume increases, prioritization matters more. Clear signals help teams focus attention earlier instead of rechecking each case. Review stays consistent, even when workloads spike.

Resolver’s Whistleblowing & Case Management Software is built around that reality. Reporting, case handling, and review stay connected in one workflow, so ownership remains clear as expectations increase.

If you’re evaluating AI and need to see whether a business case actually holds up, speak with our team to see how intake, review, and accountability work together in practice.