The Global Standard for VAWG Governance: Why Intent is the New Metric for Platform Safety

Regulators aren't just reviewing what you removed. They're looking at what your risk assessment covered — and when.

Editor’s note (April 30, 2026): This piece was updated to revise previous references to the UK Crime and Policing Bill, which received Royal Assent on April 29, 2026 and is now the Crime and Policing Act 2026.

For years, Violence Against Women and Girls (VAWG) existed primarily as a fragmented policy challenge rather than a unified regulatory obligation. While industry leaders have long recognized the severity of these harms, the response was often siloed: split across platform teams separately managing hate speech, violent extremism, harassment, or graphic violence. This internal fragmentation made it difficult to address VAWG with the same structural rigor applied to other priority illegal content.

In its most explicit form, VAWG is distinct and distressing, but these acts sit on the far end of a complex spectrum of behaviors that are increasingly implicit and nuanced. The challenge for platforms has been navigating this gray area, where bad actors obfuscate their intent and move the harm “off-site” to less protected spaces. Historically, the legal framework required to aggregate these diverse behaviors into a single, documented governance category simply didn’t exist. That era of ambiguity ended in early 2026.

In July 2024, the UK took the step of formally declaring VAWG a national emergency. While specific to one jurisdiction, the data underpinning that decision is illustrative of a global crisis. In England, research from the Open University indicates that over 10% of women have experienced digital abuse, a figure that mirrors rising global trends in technology-facilitated violence. The “whole-system” response that the declaration called for, spanning criminal justice and education, has become the blueprint for a new international regulatory baseline. The scale of the harm is no longer in doubt; the global question is whether the compliance infrastructure exists to contain it.

That’s changing fast, and in more than one jurisdiction at once. Across the UK, EU, and US, a sequence of legislative developments between November 2025 and May 2026 has moved VAWG governance from policy aspiration to formal legal obligation. The gap between harmful and illegal is closing.

For General Counsel and compliance teams, the question is no longer whether VAWG requires formal governance infrastructure. It’s whether the infrastructure they have is sufficient to demonstrate compliance.

Why the 2025-2026 sequence changes everything

What we are witnessing is not a series of one-off legislative moments, but a consistent pattern across multiple jurisdictions. Platforms that have treated each development as a separate compliance notification — update the policy, brief the team, move on — have missed the direction of travel.

| Date | Development | What it means for platforms |
| --- | --- | --- |
| May 2024 | EU Directive 2024/1385 | Establishes criminal law obligations covering non-consensual intimate imagery (NCII), cyberstalking, and cyberharassment. Platforms operating across the EU are already within scope ahead of the June 2027 transposition deadline. These standards are increasingly adopted as best practice globally. |
| July 2024 | UK VAWG National Emergency | UK police chiefs and the College of Policing formally declare VAWG a national emergency. Reactive enforcement is no longer adequate: the expectation of a whole-system response, spanning criminal justice, health, and education, is now on record. |
| October 2024 | G7 Safety by Design Principles | Introduces global expectations for mitigating AI-generated VAWG, embedding proactive safety design into platform governance. |
| May 2025 | US Take It Down Act | Enacts a strict 48-hour NCII removal requirement, setting a global benchmark for response times. |
| November 2025 | Ofcom VAWG Guidance | First comprehensive regulatory framework defining how VAWG risk is assessed under the Online Safety Act, establishing formal evaluation criteria. It provides the technical criteria now being studied by regulators in Australia, Canada, and the EU. |
| Jan–Mar 2026 | Active Enforcement Waves | Ofcom issues over £3.5M in fines, including against an AI nudification platform. Signals active enforcement, not future intent. |
| February 2026 | Data (Use and Access) Act 2025 & Deepfake Criminalization | Criminalizes both creation and request of NCII, including AI-generated deepfakes. Major jurisdictions (UK, US, EU) move in lockstep to criminalize the supply of AI “nudification” tools, shifting the focus from individual content to technological facilitation. Platforms that host or facilitate this activity are operating in a changed criminal law environment from this date. |
| March 2026 | Ofcom–ICO Joint Statement (DRCF) | Confirms age assurance can meet both online safety and data protection obligations. Removes the argument that privacy and safety requirements conflict, and that compliance can therefore be deferred. |
| April 2026 | UK Crime and Policing Act 2026 | NEW: The Crime and Policing Act 2026 received Royal Assent on April 29, 2026, activating a strengthened UK enforcement window. Key provisions include criminal liability for NCII sharing, court-ordered image deletion, a 48-hour platform removal duty, criminalisation of AI deepfake NCII tools, new pornography offences, and personal criminal liability for executives who fail to comply with Ofcom NCII decisions. |
| May 2026 | Global Enforcement Window | US and UK online safety compliance deadlines converge. With the UK Crime and Policing Act 2026 having become law on April 29, platforms without documented cross-border governance processes now face immediate and escalating enforcement risk. |

Taken individually, each looks like a discrete compliance event. Taken together, they describe a regulatory environment where VAWG governance is documented, assessable, and in some jurisdictions personally enforceable. The question for General Counsel isn’t which development requires a response. It’s whether the governance infrastructure exists to respond to all of them.

A risk assessment is a living document, not a homework assignment. If your first entry regarding VAWG radicalization is dated the day a law receives Royal Assent, you have effectively documented a period of prior oversight.

VAWG governance has a gap

VAWG isn’t a single category of conduct. It’s a spectrum. The most dangerous exposure for platforms sits in the gray zone — content that is harmful and escalatory but doesn’t meet the threshold for a straightforward removal decision.

Under Article 34 of the EU Digital Services Act (DSA), gender-based violence is among the “systemic risks” that very large online platforms and search engines must assess. This means that for in-scope services with EU users, the question is no longer just “Did you remove it?” but “How does your recommender system or algorithmic design amplify this harm?”

Regulators (such as Ofcom and the European Commission) are concerned with whether platforms assess the full risk environment, including content that operates below the threshold of obvious illegality. Misogynistic rhetoric that precedes abuse, or coordinated harassment designed to look organic, matters deeply to a regulator assessing whether your risk infrastructure was adequate.

VAWG isn’t always loud and explicit. It’s often quiet and implicit. Bad actors seek out the cracks in platform defences like water — diversifying, changing tack, moving to less safe spaces. The compliance infrastructure has to be designed to see the full spectrum, not just the loudest signals.

Why “intent” is the new online safety compliance metric

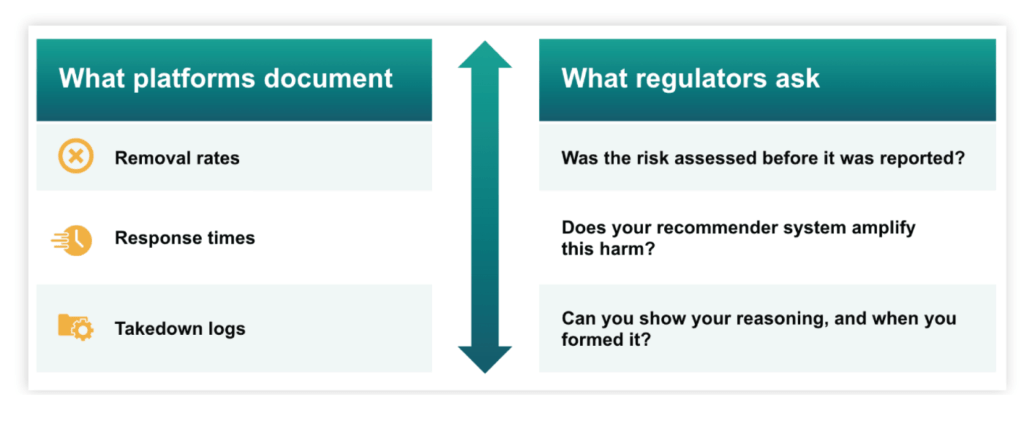

It’s all too easy to build governance infrastructure around the wrong question. The focus has historically been on unit action — removal rates, response times, takedown logs. Regulators are now asking a different question entirely.

For a compliance officer, this creates a “documentation gap.” If a regulator asks how you assess the risk of nudification tools, or technology-facilitated coercive control, or the escalation pathway from misogynistic content to targeted abuse — “we removed it when reported” doesn’t cut it. The question isn’t whether you acted. It’s whether you understood the risk before it was reported, and whether you can show that you did.

True governance requires evidence-based Trust & Safety intelligence that delivers the ability to:

- Identify harm pathways: Not individual pieces of content in isolation, but the routes through which low-severity signals escalate into enforcement-visible outcomes. Regulators expect you to understand how a search for “fitness” leads a young boy into an incel rabbit hole.
- Utilize localized SME intelligence: Harms are social constructions. A Manosphere signal in the US looks very different from a traditionalist radicalization signal in the Middle East or South Asia. Effective online safety compliance requires greyscale expertise: analysts who can distinguish between a cultural meme and a coordinated stalking campaign across 50+ languages.
- Map bad actor networks: “Shop window” accounts (profiles that look compliant on-platform while orchestrating abuse elsewhere) are a documented feature of VAWG-related harm. Enforcement decisions made at the content level, without visibility into those networks, won’t reflect the actual risk architecture.

Without that intelligence, your governance process has nothing to stand on.

What adequate VAWG governance actually requires

When regulators ask whether a technology provider assessed the risk of VAWG, they’re not looking for a takedown log. They want evidence that the platform understood the nature of the risk, built proportionate controls, and updated its assessment as the threat environment changed.

In practice, that means a risk assessment covering the full spectrum of VAWG-related conduct — not just the most explicit categories. It means documenting how that assessment informed enforcement decisions, so the reasoning is on record when a decision is questioned. And it means a review cadence that reflects the pace of regulatory change. A risk assessment written to pre-November 2025 standards already has a gap.
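For teams that track review dates in a system of record, the cadence point above can be checked mechanically. The following is a minimal sketch, not a compliance tool: the `Milestone` type and `unreviewed_milestones` function are hypothetical names, the list covers only some entries from the timeline in this article, and where the timeline gives only a month, the first of that month is used as a placeholder date (an assumption).

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Milestone:
    when: date
    name: str


# Subset of the timeline in this article. Day-of-month values are
# placeholders (assumed) except for the April 29, 2026 Royal Assent date.
MILESTONES = [
    Milestone(date(2025, 11, 1), "Ofcom VAWG Guidance"),
    Milestone(date(2026, 2, 1), "UK deepfake criminalization"),
    Milestone(date(2026, 3, 1), "Ofcom-ICO Joint Statement"),
    Milestone(date(2026, 4, 29), "Crime and Policing Act 2026 (Royal Assent)"),
]


def unreviewed_milestones(last_review, milestones=MILESTONES):
    """Return milestones dated after the last documented review, oldest first."""
    later = [m for m in milestones if m.when > last_review]
    return sorted(later, key=lambda m: m.when)


if __name__ == "__main__":
    # Hypothetical example: a risk assessment last reviewed in September 2025.
    for m in unreviewed_milestones(date(2025, 9, 15)):
        print(f"{m.when.isoformat()}  {m.name}")
```

Any milestone this returns marks a regulatory change the documented assessment has not yet been reviewed against. It says nothing about whether the review itself was adequate; that judgment still rests with counsel and subject-matter experts.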

VAWG governance in 2026 requires more than a keyword list. What constitutes coercive control or incitement shifts across borders, faiths, and generations. That’s where automated tools fail and where greyscale expertise — analysts who can distinguish between a cultural meme and a coordinated stalking campaign — becomes the difference between adequate governance and documented inadequacy.

Much like the EU’s recent transpositions and the US federal crackdown, the Crime and Policing Act in the UK is part of a global pincer movement. Platforms that have built their infrastructure to treat VAWG as a formal risk category already reflect the standard regulators are now enforcing. For those that haven’t, these bills and laws are not just local updates; they are symptoms of a rapidly closing compliance window. Whether through image‑deletion orders in London or systemic risk audits in Brussels, the expectation is the same: show your work.

Securing the ecosystem: Moving to proactive intelligence

By shifting from a model of waiting for reports to a model of proactive online safety intelligence, platforms can map the cracks before the water flows through.

The regulatory sequence is now active, and the window to get ahead is rapidly closing. Platforms still working from pre-2025 risk assessment frameworks have a gap — one that becomes visible at the point of regulatory scrutiny, not before.

Resolver works with platforms and online service providers to build the governance infrastructure that connects Trust & Safety operations to formal regulatory obligations. We empower you to move from reactive moderation to proactive, audit-ready security.

Don’t wait for a regulatory audit to find the cracks. Contact Resolver today for a gap analysis of your VAWG governance infrastructure.

Learn more about how we support global leaders in online safety compliance.

More from Dr. Paula Bradbury: