The Rise of Synthetic Propaganda – Gen AI’s growing threat to election integrity

Introduction

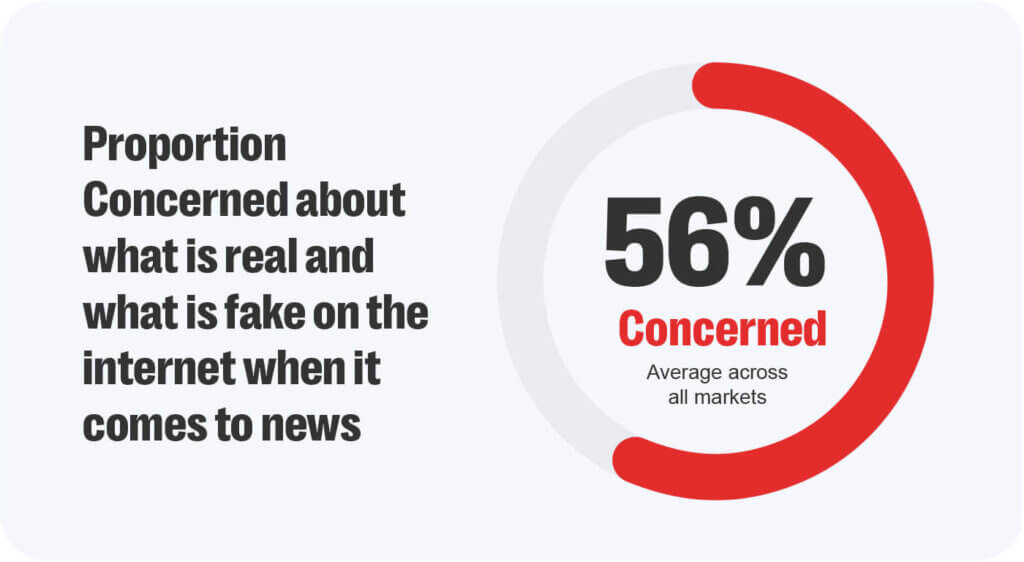

The accessibility of sophisticated tools employing Generative AI (Gen AI) combined with the near-instantaneous spread of information across social media platforms has fuelled public anxieties over how to safeguard democratic discourse and electoral integrity in an age of AI-powered disinformation.

Purveyors of falsehoods are increasingly leveraging open- and closed-source models to create synthetic audio, video, images and text designed to manipulate voter perceptions, disparage political opponents, manufacture public support for particular policies or politicians, and undermine the legitimacy of elected governments. While the use of rumors and speculation to achieve political or cultural goals is not new, rapid advances in Gen AI are making it easier and cheaper to create realistic, highly personalized synthetic content at scale.

An inflection point for the misuse of Gen AI

With more than 80 countries set to hold elections in the coming year, 2024 represents a significant inflection point for the use of Gen AI in disinformation campaigns targeting voters across the globe. Rising political polarization, declining public trust in traditional sources of information, and a growing proportion of voters who use online platforms as their primary means of consuming and sharing political narratives have created an information environment that is highly susceptible to the spread of false and polarizing content.

(Source: Reuters Digital News Report 2023)

While experts have observed malicious actors using AI-generated images in influence operations targeting voters since at least 2019, recent advances in Gen AI have improved the quality of generated content. As a result, bad actors are increasingly relying on the technology to produce content tailored to specific demographics, interests and languages.

In January 2024, the World Economic Forum (WEF) published its Global Risks Report 2024, which ranked ‘mis- and disinformation’ as the new number one risk in its top 10 for the next two years. The report highlighted how access to Gen AI had ‘already enabled an explosion in falsified information’ and warned that synthetic content will be used to ‘manipulate individuals, damage economies and fracture societies in numerous ways’.

Underscoring this threat, a recent study published in PNAS Nexus concluded that malicious actors are likely to use Gen AI in daily influence operations targeting several major global elections over the coming year. The study also mapped the spread of false narratives across 23 online platforms, from larger platforms to smaller niche communities, and found that inauthentic accounts using basic AI tools to ‘generate content continually’ would be able to ‘quickly and widely spread this content’ across the platform landscape.

The porous nature of the online information environment can also complicate the efforts of platform moderators seeking to mitigate the harms of synthetic disinformation. According to Richard Stansfield, Mis- and Disinformation Lead at Resolver, a Kroll business: “Our observations around elections and geo-political conflicts such as the war in Ukraine, is that while imagery and video based AI content is relatively simple to reveal and debunk, audio and text-based fakes can provide a significant challenge for platforms.”

These challenges are likely to intensify around elections and other moments of national significance, driven by spikes in the volume of generated content and by the disparity in Trust and Safety resources and infrastructure between larger mainstream platforms and smaller alt-tech communities.

Gen AI-powered disinformation campaigns on the rise

At present, synthetic media makes up a relatively small proportion of the falsified content amplified across the information environment. Traditional forms of deception, such as miscontextualized comments and videos, photoshopped images, targeted harassment campaigns (including the use of hate speech) and coordinated inauthentic activity, are still favored by state and state-affiliated actors seeking to distort voter perceptions.

However, as use of the technology becomes more widespread, generated content will increasingly be deployed to influence voters during elections and moments of political crisis. In June 2023, an AI-generated image showing a woman facing off against riot police was used to bolster support for former Prime Minister Imran Khan amid an escalating political stand-off with the military establishment in Pakistan. In October, two deepfake audio clips that sought to disparage Labour Party leader Sir Keir Starmer were amplified across social media ahead of the party’s conference in the UK. Then, in January 2024, a robocall impersonating U.S. President Joe Biden urged voters in New Hampshire not to vote in the state’s presidential primary election.

According to Richard Stansfield, “The complexity of the misuse of Gen AI for political disinformation also increases as we see countries intentionally incorporate this technology into political campaigns.” In South Korea, deepfake technology was used by then-presidential candidate Yoon Suk-yeol to create a digital avatar dubbed “AI Yoon” in a bid to appeal to younger voters. In India, meanwhile, the technology was used to digitally resurrect M. Karunanidhi, the late leader of the Dravida Munnetra Kazhagam (DMK), for political campaigning ahead of the 2024 Lok Sabha elections.

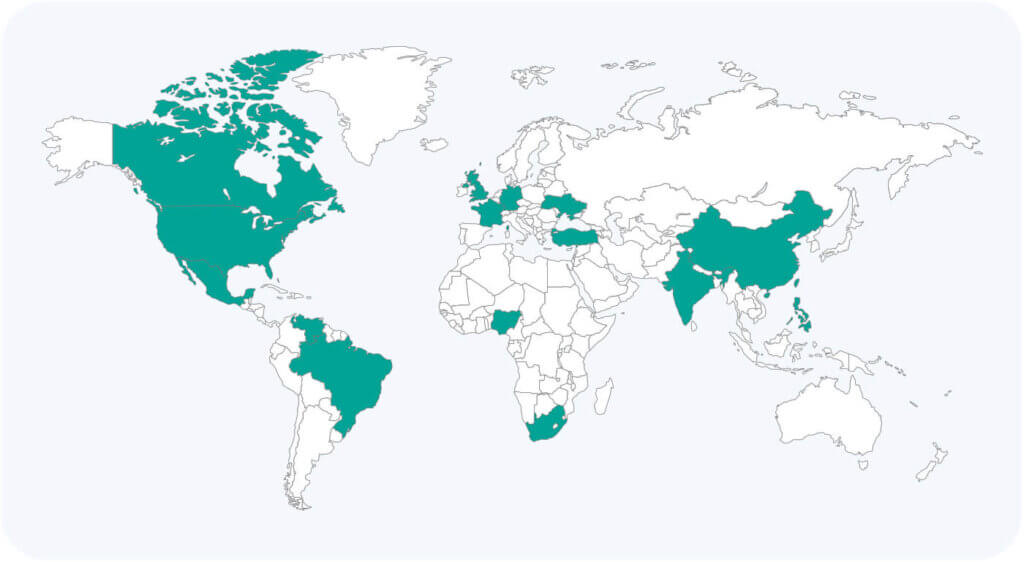

(Source: Freedom on the Net 2023 Report)

According to Freedom House’s Freedom on the Net 2023 report, Gen AI tools were used in at least 16 countries over the past year to sow doubt, smear opponents, or influence public debate.

Exacerbating the “Liar’s Dividend”

Conversely, the growing use of generated content in disinformation campaigns has also accelerated a phenomenon known as the “liar’s dividend”. The term was coined by legal scholars Robert Chesney and Danielle Citron to describe how ‘liars aiming to dodge responsibility for their real words and actions will become more credible’ as the public grows more educated about the dangers posed by synthetic content. In this way, greater public awareness of what Gen AI can do has fed rising skepticism about the authenticity of information disseminated online.

Going forward, unscrupulous political actors will be able to dismiss legitimate scandals as AI-generated fabrications. In April 2023, two audio recordings of Palanivel Thiagarajan, the Minister of Information Technology and Digital Services for the south Indian state of Tamil Nadu, were amplified on social media by his political opponents. The clips allegedly captured the politician accusing members of his own party of corruption. Although Thiagarajan disputed the authenticity of the recordings, an independent forensic analysis found that at least one of the two clips appeared to be authentic.

Meanwhile, a recent survey experiment by Purdue University involving more than 15,000 American adults tested whether casting true scandals as “misinformation” made voters more likely to support the politicians involved. It found that making ‘claims of misinformation’ helped ‘raise politician support across partisan subgroups’.

Conclusion

The misuse of Gen AI technology poses several credible near-term risks to the integrity of democratic processes. These include enhancing the existing capabilities of malicious actors engaged in influence operations; lowering the barrier to entry for those seeking to craft disinformation campaigns; and undermining public trust in democratic discourse, including by allowing bad actors to dismiss legitimate oversight by casting doubt on the authenticity of incriminating evidence.

Mitigating the threat posed by AI-powered disinformation will require collaboration among a wide range of key stakeholders, including governments, social platforms, commercial Gen AI services, media organizations and civil society at large. Such efforts should focus on improving digital literacy about the capabilities of Gen AI technologies, regulating how generated content is developed and distributed, and enabling its swift detection.

Our Platform Trust and Safety solution offers a fully managed service that enhances community safety through integrated content and bad actor detection and external intelligence, giving partners comprehensive protection against emerging online threats, including mis- and disinformation. Working with many of the world’s leading online platforms, Resolver, a Kroll business, also provides red teaming exercises, strategic intelligence reporting and rapid alerting on emerging threats, including the misuse of their tools to generate political mis- and disinformation.