How AI Is Being Used in GRC: 5 Practical Patterns for Risk & Compliance Teams

Get a practical look at how AI fits into real GRC workflows with the five patterns reshaping how risk and compliance teams manage regulatory change, reporting, and operational complexity.

Running a mature compliance program takes real expertise. Knowing which regulatory changes will affect your controls, where your coverage gaps are before an auditor finds them, how to give leadership meaningful visibility without spending weeks building a report — that’s hard-won knowledge. The frustration most compliance leaders feel isn’t about the complexity. It’s about how much time goes into work that doesn’t reflect it.

The numbers back that up: 60% of compliance teams need several days to produce a single audit or regulatory report, and 61% are understaffed or working solo. For 32% of teams, keeping up with regulatory change is their top challenge. Incorporating AI in compliance programs can ease that pressure. It helps teams review regulatory updates faster, flag what applies, and structure reporting without rebuilding it each time.

At the ProSight Women in Financial Services Summit, Resolver’s Yoga Huo addressed exactly that. In her session, “Operationalizing AI in GRC”, she broke down where AI creates genuine value in a GRC program and how it works in practice.

This blog outlines how AI is already helping teams reduce operational friction, improve consistency, and focus more time on analysis and decision-making.


How is AI being used in GRC?

AI in compliance refers to the use of machine learning and language models to support tasks like regulatory analysis, control mapping, reporting, and risk classification.

GRC work is document-heavy by nature. Regulations, policies, controls, SOC reports, and evidence packages all arrive from multiple sources, in multiple formats, on overlapping timelines. That makes it a natural fit for AI models, which were built to read, compare, summarize, and structure large volumes of content faster and more consistently than manual processes allow.

What’s emerging across risk and compliance functions isn’t a single AI use case. It’s a set of repeatable patterns, each one mapping to a specific point where effort tends to accumulate. Together, they form a practical picture of how AI in compliance fits into existing workflows.

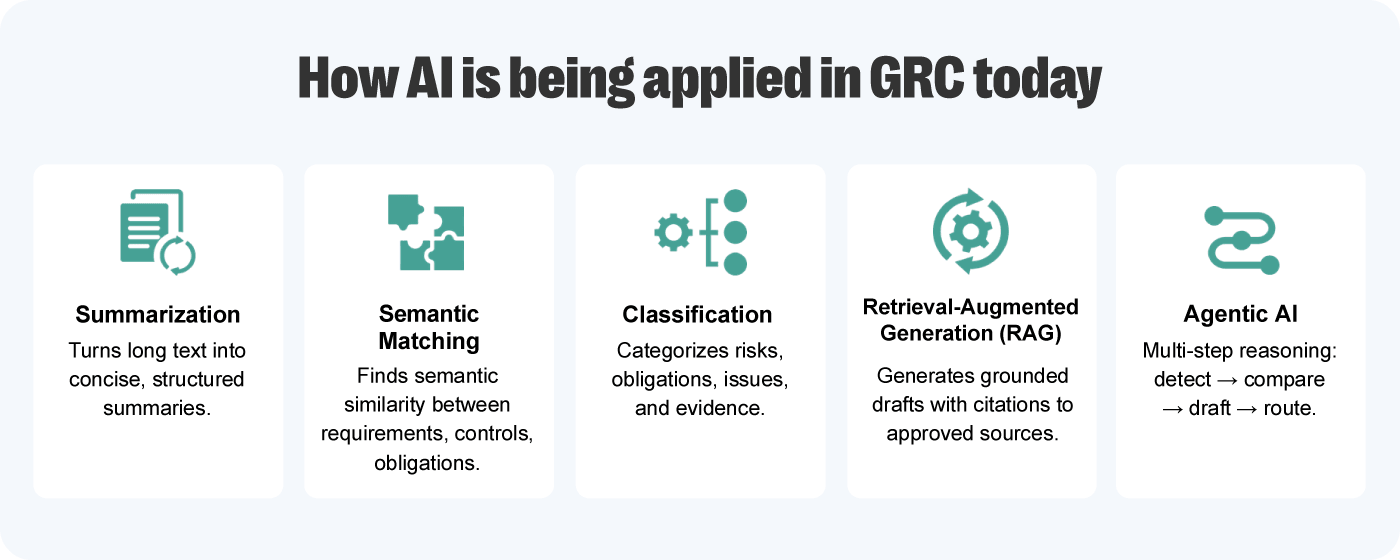

The 5 patterns shaping AI in compliance

As Huo explains, “AI isn’t one capability, it’s a set of approaches that map to different types of work”. The five patterns below show what that looks like inside a GRC program, and which kind of work each one is suited to.

1. Summarization

A large portion of GRC work begins before any decision is made. Teams spend time reviewing regulatory updates, enforcement actions, and internal documentation just to establish a baseline understanding.

That effort is necessary. But at scale, it slows everything down. The longer it takes to process source material, the longer it takes to move into analysis, discussion, and action.

Summarization changes that starting point. Instead of working through dense documents line by line, teams begin with structured outputs that surface key information while preserving context. The work doesn’t disappear, but it becomes more manageable.

For GRC teams, this shows up most clearly in:

- Regulatory updates and guidance reviews

- Enforcement notices and commentary

- Internal policy and control documentation

What changes is not just speed, but alignment. When teams start from the same distilled version of information, there’s less variation in interpretation and fewer delays caused by rework.

What this means in practice:

- Faster transition from intake to analysis

- More consistent understanding across stakeholders

- Reduced effort spent on low-value review work

This shift seems small, but it changes how work begins. When teams can move past intake faster, they create more space for the analysis and judgment that actually drive decisions.


2. Semantic matching

GRC environments rarely operate with clean, standardized language. Controls, risks, and obligations often describe similar requirements in different ways, depending on the framework or source.

That creates friction when teams need to map across frameworks or assess coverage. What appears different may be equivalent. What appears similar may not be.

Semantic matching addresses this by focusing on meaning rather than wording. Rather than comparing keywords, it evaluates how concepts relate to each other.
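The idea can be sketched in a few lines, assuming each control statement has already been converted into an embedding vector by a language model. Everything here is illustrative: the control IDs, the wording, and the three-number vectors are made up by hand, while real embeddings come from a model and have hundreds of dimensions. The sketch compares vectors with cosine similarity and keeps the closest match only when it clears a threshold.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hypothetical controls: ID -> (text, hand-made stand-in embedding).
framework_a = {
    "A-1": ("Users must authenticate with a second factor", [0.9, 0.1, 0.0]),
    "A-2": ("Backups are tested quarterly", [0.0, 0.2, 0.9]),
}
framework_b = {
    "B-7": ("Multi-factor login is enforced for all accounts", [0.8, 0.2, 0.1]),
    "B-9": ("Restore procedures are exercised on a schedule", [0.1, 0.1, 0.9]),
}

def best_matches(source, target, threshold=0.8):
    """Map each source control to its closest target control, or None if nothing is close enough."""
    matches = {}
    for s_id, (_, s_vec) in source.items():
        t_id, score = max(
            ((cand_id, cosine(s_vec, cand_vec)) for cand_id, (_, cand_vec) in target.items()),
            key=lambda pair: pair[1],
        )
        matches[s_id] = t_id if score >= threshold else None
    return matches

matches = best_matches(framework_a, framework_b)
for s_id, t_id in matches.items():
    print(s_id, "->", t_id)
```

A-1 and B-7 share few keywords, yet they pair up because their vectors point in nearly the same direction. The threshold is an arbitrary choice in this sketch; returning `None` below it leaves weak matches for an analyst to review instead of forcing a mapping.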

For GRC teams, this shows up most clearly in:

- Control mapping across frameworks

- Regulatory obligation alignment

- Risk and control harmonization

The impact is less about automation and more about consistency. Manual mapping introduces variation, especially when judgment is required. Semantic models apply the same logic across all items.

What this means in practice:

- Faster mapping across large datasets

- Reduced inconsistency between analysts

- Greater confidence in coverage and alignment

Over time, that consistency compounds. As frameworks, regulations, and controls evolve, having a reliable way to connect them becomes less of a one-time effort and more of an ongoing advantage.

3. Classification

As GRC programs scale, the challenge shifts from understanding individual items to managing the flow of them. Regulatory updates, incidents, risks, and issues arrive continuously and need to be categorized before action can happen.

In many cases, that categorization still depends on manual review. It works at low volume, but it becomes a bottleneck as inputs increase.

Classification models address that step directly. They assign categories, tags, or ownership based on how incoming data is interpreted, allowing items to move into the right workflows without delay.
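As a rough sketch of that step, the snippet below routes incoming items to an owning queue based on a predicted label and a confidence score. The labels, queue names, keyword lists, and the 0.6 threshold are all assumptions for illustration, and the `classify` function is only a keyword-counting placeholder for a trained model.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str
    confidence: float

# Hypothetical mapping from label to owning queue.
QUEUES = {"privacy": "privacy-office", "aml": "financial-crime", "security": "infosec"}

# Placeholder for a trained classifier: hand-picked keywords per label.
KEYWORDS = {
    "privacy": {"personal", "consent"},
    "aml": {"laundering", "sanctions", "suspicious"},
    "security": {"breach", "encryption"},
}

def classify(text: str) -> Prediction:
    """Score each label by keyword hits; confidence is the winning label's share of all hits."""
    lowered = text.lower()
    scores = {label: sum(kw in lowered for kw in kws) for label, kws in KEYWORDS.items()}
    total = sum(scores.values())
    if total == 0:
        return Prediction("unknown", 0.0)
    label = max(scores, key=scores.get)
    return Prediction(label, scores[label] / total)

def route(text: str, min_confidence: float = 0.6) -> str:
    """Send confident predictions to their queue; everything else goes to a person."""
    pred = classify(text)
    if pred.confidence < min_confidence or pred.label not in QUEUES:
        return "manual-review"
    return QUEUES[pred.label]

print(route("New guidance on sanctions screening and suspicious activity reports"))
print(route("Breach of personal data"))
```

The second item touches two categories equally, so its confidence falls below the threshold and it lands in manual review. Whatever model sits behind `classify`, a fallback like this keeps ambiguous items in front of a person.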

For GRC teams, this shows up most clearly in:

- Regulatory triage and routing

- Risk and issue categorization

- Incident intake and tagging

The change is subtle but important. When categorization happens quickly and consistently, the rest of the workflow moves more smoothly.

What this means in practice:

- Reduced delays in triage and routing

- More consistent categorization across teams

- Lower risk of missed or misrouted items

When inputs are structured correctly from the start, downstream workflows require less correction and less coordination.


4. Retrieval-augmented generation (RAG)

In GRC, outputs need to be defensible. Content must align with internal policies, controls, and regulatory requirements, which makes generic AI outputs difficult to use in practice.

RAG addresses this by grounding outputs in approved internal content. Instead of generating responses from general knowledge, the model retrieves relevant internal sources and uses them to produce aligned outputs.
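The retrieval and grounding steps can be sketched as follows. This is a simplified illustration, not a production design: the document IDs and policy text are invented, the word-overlap ranking is a toy substitute for the vector search a real system would likely use, and the final call to a language model is left out.

```python
import re

# Hypothetical approved internal content, keyed by document ID.
APPROVED_SOURCES = {
    "POL-012": "Access to production systems requires multi-factor authentication.",
    "POL-031": "Vendor contracts are reviewed annually by the compliance team.",
    "CTL-104": "Access reviews for production systems are performed quarterly.",
}

def tokens(text):
    """Lowercased set of alphabetic words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question, sources, top_k=2):
    """Rank sources by word overlap with the question (toy stand-in for vector search)."""
    q = tokens(question)
    scored = [(len(q & tokens(body)), doc_id) for doc_id, body in sources.items()]
    ranked = sorted(scored, key=lambda s: (-s[0], s[1]))
    return [doc_id for score, doc_id in ranked[:top_k] if score > 0]

def build_prompt(question, sources, top_k=2):
    """Assemble a prompt that restricts the model to retrieved sources and asks for citations."""
    doc_ids = retrieve(question, sources, top_k)
    context = "\n".join(f"[{d}] {sources[d]}" for d in doc_ids)
    prompt = (
        "Answer using only the sources below and cite their IDs.\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )
    return prompt, doc_ids

prompt, cited = build_prompt(
    "How often are access reviews performed for production systems?",
    APPROVED_SOURCES,
)
print(cited)
print(prompt)
```

Returning the retrieved document IDs alongside the prompt lets a reviewer see which approved sources a draft was built from, which is what makes traceability possible.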

For GRC teams, this shows up most clearly in:

- Policy drafting and updates

- Control development and refinement

- Gap analysis against new regulations

Instead of starting from a blank page, teams begin with content that is already aligned to their environment. Huo described this as “removing the work required just to get to a starting point”.

What this means in practice:

- Stronger alignment with internal policies and controls

- Built-in traceability for audit and review

- Fewer revision cycles across stakeholders

Teams can now align on where content came from and focus on improving it. The result is a faster, more productive review process.

5. Agentic AI

Some GRC tasks are simple. Others require multiple steps: reviewing inputs, applying logic, assigning categories, triggering workflows, and documenting outcomes.

While those steps are manageable on their own, they create operational friction when combined — especially in high-volume environments.

Agentic AI connects those steps into a single flow. Instead of stopping at one output, it moves through a sequence of actions based on predefined logic.
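A minimal sketch of such a flow for case intake is shown below. The step names, queue names, and keyword rule are hypothetical, and plain functions stand in for the places where a real system would call a model. Each step records an audit entry, and the flow stops early and hands off to a person when the input fails validation.

```python
from datetime import datetime, timezone

def review(case):
    """Check that the case has something to work with."""
    case["valid"] = bool(case.get("description", "").strip())
    return case

def categorize(case):
    # Stand-in for a model call: a fixed keyword rule.
    text = case["description"].lower()
    case["category"] = "security" if "breach" in text else "general"
    return case

def assign_route(case):
    case["queue"] = "infosec" if case["category"] == "security" else "compliance-intake"
    # Judgment stays with people: security cases are flagged for human sign-off.
    case["needs_human"] = case["category"] == "security"
    return case

STEPS = [review, categorize, assign_route]

def run_intake(case):
    """Run each step in order, logging it, and stop early if the case is invalid."""
    case = dict(case, audit_log=[])
    for step in STEPS:
        case = step(case)
        case["audit_log"].append(
            {"step": step.__name__, "at": datetime.now(timezone.utc).isoformat()}
        )
        if not case.get("valid", True):
            case["queue"] = "manual-review"
            break
    return case

result = run_intake({"description": "Possible data breach reported by vendor"})
print(result["queue"], result["needs_human"])
print([entry["step"] for entry in result["audit_log"]])
```

Because every step writes to the same log, the record of what ran and when is produced by the flow itself, not reconstructed afterward.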

For GRC teams, this shows up most clearly in:

- Incident and case intake

- Workflow routing and escalation

- Multi-step investigation processes

The value compounds across the workflow, because each step no longer depends on manual intervention to move forward. Tasks don’t pause between steps, and the same logic is applied consistently each time.

What this means in practice:

- Faster end-to-end process execution

- Reduced manual handoffs between steps

- More consistent and auditable workflows

As volume increases, that consistency becomes critical. Processes that once required constant oversight can run more reliably, with teams stepping in where judgment is needed, not where repetition used to be.


Why AI initiatives stall in GRC (and what to do about it)

These five patterns don’t replace human judgment. That’s not a caveat — it’s the point.

Implementing AI in compliance programs frees up capacity for the work that actually demands professional judgment: interpreting findings, making decisions, advising stakeholders, governing programs, and communicating risk to leadership.

Huo observed that, across organizations, there isn’t concrete opposition to AI, but there is an information gap. Most proposals arrive too late, often half-built, and without the artifacts approvers need to evaluate the risk. Legal, risk, and audit teams don’t default to “no” because they’re resistant. They default to “no” when they don’t have enough information to say “yes”.

Approvers need clarity on six things:

- The business problem and why AI is the right fit

- What the AI will and won’t do

- What data it touches and how it’s handled

- How the use case is classified by risk level

- What controls and guardrails are in place

- Evidence that the feature won’t breach policy

A controlled pilot with clear success criteria reduces the size of the commitment and gives approvers something concrete to evaluate before a full rollout. Building that documentation before building the proposal is how you make it easier for internal teams to say yes.

What AI in GRC looks like on a platform built for it

AI only creates value when it fits into the workflows teams already rely on. That’s where many implementations fall short — the capability exists, but it sits outside the system where the work actually happens.

Resolver’s GRC platform brings these patterns into the workflows where compliance and risk teams already operate. That way, teams don’t need to rethink how they operate to benefit from automation. AI handles the structured work. Humans stay in control of the decisions.

If you’re thinking about where AI fits in your GRC program, start with your current workflows: where manual work is high, where consistency breaks down, and where your team’s time is going. Watch the replay of our webinar to see how teams are operationalizing AI in their compliance programs, and book a demo to see how Resolver supports that work in practice.